An exclusive article by Fred Kahn

AI peer group comparison has emerged as a transformative force within anti-money laundering (AML) frameworks, driving a major shift in how financial institutions detect illicit activity. Many organizations now turn to machine learning to move beyond traditional rules-based systems, which often produce high volumes of false alerts. Instead of applying one threshold to everyone, these systems identify suspicious outliers by evaluating each customer against a cohort of similar profiles. This technique provides a more granular view of transactional behavior and can surface complex patterns that standard monitoring might overlook. However, the effectiveness of these tools depends heavily on the integrity of the underlying data. As the global financial landscape becomes increasingly digital, the need for sophisticated surveillance grows in step with the complexity of criminal tactics. Financial firms must now balance the efficiency of automated systems against the ethical and operational risks inherent in high-tech monitoring.

Table of Contents

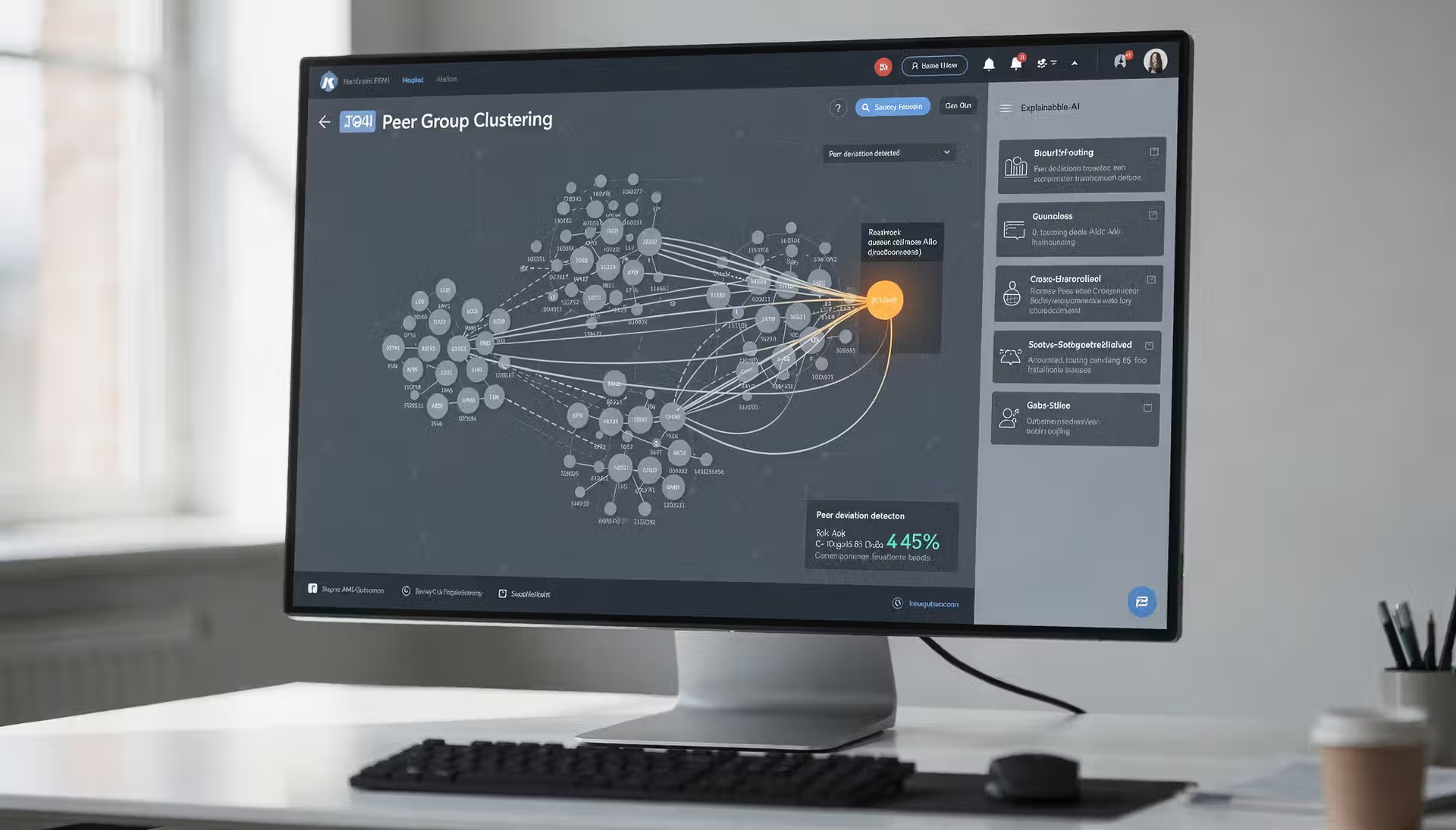

AI Peer Group Comparison

The adoption of peer group comparison through machine learning offers a new way to detect financial crime. Unlike static rules that apply the same thresholds to every customer, this technology segments customers into clusters based on shared characteristics such as occupation, income level, and typical spending habits. When a transaction occurs, the system evaluates it against the established norms of that customer’s peer group rather than a generic benchmark. This contextual awareness helps banks reduce irrelevant alerts: a large transfer that is routine for a corporate client would not raise the same red flag as an identical transfer from a student’s retail account. By focusing on deviations from peer behavior, compliance teams can direct their resources to genuinely anomalous activity. This shift from manually set thresholds to dynamic behavioral modeling is a significant step forward in catching sophisticated laundering schemes.

Furthermore, the ability of AI to process massive datasets in near real time allows a level of scrutiny that was previously impractical. The technology does not look at a single transaction in isolation; it treats each one as part of a broader pattern of financial movement. By learning the typical economic footprint of a group, the model can recognize when a specific member begins to diverge in ways that suggest potential risk. This proactive stance is essential for institutions seeking to stay ahead of criminal networks that frequently change their methods to evade detection. Because the clusters are granular, a professional athlete, a small business owner, and a high-frequency trader are each measured against relevant peers, which reduces the chance that their unusual but legitimate financial lives trigger false suspicions. As algorithms become better at identifying these clusters, reliance on rigid, legacy monitoring systems continues to diminish, giving way to a more fluid and responsive digital defense.
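A minimal sketch of the core comparison, assuming each customer has already been assigned to a peer group and that a simple z-score against the group’s history is an acceptable stand-in for a production scoring model. The segment names, the historical figures, and the threshold of 3 standard deviations are hypothetical illustrations, not values from any real system.

```python
from statistics import mean, stdev

# Hypothetical peer baselines: historical monthly outbound totals per segment.
PEER_HISTORY = {
    "retail_student": [300.0, 450.0, 380.0, 520.0, 410.0],
    "corporate_sme": [48000.0, 52000.0, 61000.0, 45000.0, 57000.0],
}


def peer_zscore(segment: str, amount: float) -> float:
    """Return how many standard deviations `amount` sits from its peer group's mean."""
    history = PEER_HISTORY[segment]
    mu = mean(history)
    sigma = stdev(history)
    if sigma == 0:
        # A perfectly uniform peer group: any different amount is maximally unusual.
        return 0.0 if amount == mu else float("inf")
    return (amount - mu) / sigma


def is_peer_outlier(segment: str, amount: float, threshold: float = 3.0) -> bool:
    """Flag the amount only if it deviates strongly from the customer's own peers."""
    return abs(peer_zscore(segment, amount)) > threshold
```

With these made-up baselines, `is_peer_outlier("corporate_sme", 50000.0)` returns `False` while `is_peer_outlier("retail_student", 50000.0)` returns `True`: the same amount is judged against two different norms. Real deployments typically combine many more features and use more robust statistics than this single-variable sketch.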

Modern Implementation of Clustering Technologies

Recent advances in the field have introduced a variety of specialized models for real-time behavioral segmentation. Unsupervised machine learning models are widely used to discover natural groupings within customer data without needing pre-existing labels of suspicious activity. These models can surface hidden correlations between accounts that span different geographic regions or business sectors. Graph-based analytics have also become a staple of modern transaction monitoring, enabling institutions to visualize and analyze the relationships between nodes in a large financial network. By treating customers and accounts as interconnected entities, these systems can detect circular funding patterns and the use of intermediaries that peer analysis alone might miss.

Hybrid systems are also becoming more common: machine learning outputs are fed into existing rule engines to provide a layered defense, so the speed of automation is tempered by the stability of established rule-based thresholds. Natural language processing has further enhanced these systems by letting them ingest unstructured data, such as news reports and legal documents, to refine the risk profile of a peer group. Together, these technologies form a multi-dimensional approach to surveillance that is more resilient than earlier generations of compliance software.

Modern platforms often use deep learning architectures that consider not just transaction amounts but also the velocity of money and the timing of transfers, building a detailed map of expected behavior. These systems can be designed to recalibrate themselves, adjusting their peer group definitions as macroeconomic conditions change. For example, during a period of high inflation, the system can recalculate what constitutes a normal grocery spend or utility payment for a specific demographic, preventing a surge of false positives across the entire customer base. This adaptability helps keep compliance efforts effective even in volatile economic environments.
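The article does not name a specific clustering algorithm. As one common unsupervised option, the sketch below uses plain k-means (Lloyd’s algorithm) in pure Python to group hypothetical customers by two made-up features: monthly inflow in thousands and transfers per month. Seeding with the first `k` points is a simplification to keep the example deterministic, not how a production system would initialize.

```python
import math
from typing import List, Sequence, Tuple

Point = Tuple[float, ...]


def kmeans(points: Sequence[Point], k: int, iterations: int = 20) -> List[int]:
    """Plain Lloyd's k-means; returns a cluster label for each point.

    Centroids are seeded with the first k points for determinism; real
    systems typically use k-means++ seeding and standardized features.
    """
    centroids = [list(p) for p in points[:k]]
    labels = [0] * len(points)
    for _ in range(iterations):
        # Assignment step: each customer joins its nearest centroid.
        labels = [
            min(range(k), key=lambda c: math.dist(p, centroids[c]))
            for p in points
        ]
        # Update step: move each centroid to the mean of its current members.
        for c in range(k):
            members = [p for p, lbl in zip(points, labels) if lbl == c]
            if members:
                centroids[c] = [sum(dim) / len(members) for dim in zip(*members)]
    return labels


# Hypothetical customers: (monthly inflow in thousands, transfers per month).
customers = [(2.0, 10.0), (60.0, 200.0), (2.5, 12.0), (55.0, 180.0), (1.8, 9.0), (65.0, 210.0)]
labels = kmeans(customers, 2)
```

Here the three low-volume profiles end up in one cluster and the three high-volume profiles in the other, with no fraud labels involved. Because k-means relies on Euclidean distance, features on very different scales should normally be standardized first; otherwise the largest-valued feature dominates the grouping.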
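Circular funding, one of the patterns graph analytics is said to catch, can be illustrated as a cycle in a directed graph of transfers. This is a minimal depth-first-search sketch over a hypothetical adjacency map of account IDs; real graph platforms also weigh time windows, amounts, and scale, all of which this example omits.

```python
from typing import Dict, List, Optional


def find_cycle(transfers: Dict[str, List[str]]) -> Optional[List[str]]:
    """Return one circular path of accounts (e.g. A -> B -> C -> A), or None.

    Depth-first search with three states: unvisited, on the current path,
    and fully explored. Reaching a node that is still on the current path
    means the funds have looped back to an earlier account.
    """
    WHITE, GREY, BLACK = 0, 1, 2
    state: Dict[str, int] = {}
    path: List[str] = []

    def visit(node: str) -> Optional[List[str]]:
        state[node] = GREY
        path.append(node)
        for nxt in transfers.get(node, []):
            nxt_state = state.get(nxt, WHITE)
            if nxt_state == GREY:
                # Slice the current path from where the loop begins.
                return path[path.index(nxt):] + [nxt]
            if nxt_state == WHITE:
                found = visit(nxt)
                if found:
                    return found
        path.pop()
        state[node] = BLACK
        return None

    for start in list(transfers):
        if state.get(start, WHITE) == WHITE:
            found = visit(start)
            if found:
                return found
    return None
```

For `{"A": ["B"], "B": ["C"], "C": ["A"], "D": ["A"]}` the function returns `["A", "B", "C", "A"]`, while a simple chain such as `{"A": ["B"], "B": ["C"]}` returns `None`. The recursive search suits a small example but would hit Python’s recursion limit on very long transaction chains, where an iterative traversal would be preferable.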

The Impact of Data Quality on Automated Detection

While the benefits of comparative analysis are significant, the reliability of the output is tied directly to the quality of the input data. Financial institutions often struggle with fragmented or incomplete records spread across legacy systems, which can produce inaccurate peer groups. If the data used to train the model is outdated or contains errors, the resulting clusters will not reflect reality. The algorithm may then fail to identify a genuine risk or, conversely, keep flagging legitimate transactions because the baseline for comparison was flawed from the start. Strong data hygiene and regular updates are essential to keep machine learning models effective as the financial landscape evolves.

Poor data quality can stem from many sources, including manual entry errors at onboarding or a lack of synchronization between regional branches. When an AI groups customers on faulty parameters, the logic of peer group comparison collapses. For instance, if a high-net-worth individual is wrongly categorized as a low-income earner because of a data error, their routine investments will appear highly suspicious. This not only wastes investigators’ time but also damages the customer experience through unnecessary account freezes or inquiries. The foundation of any successful AI implementation in transaction monitoring must therefore be a rigorous data management strategy that prioritizes accuracy, completeness, and timeliness. Without a clean source of truth, even the most advanced algorithms only automate and accelerate existing errors.

Data silos are one of the greatest obstacles to this goal, because information trapped in separate departments prevents the AI from seeing the full picture of a customer’s activity. To address this, many firms are investing in centralized data lakes that provide a unified view of client interactions, from mortgage payments to credit card transactions. Only with this holistic perspective can the AI assign an individual to the correct peer group and monitor their behavior with precision.
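A rough sketch of a quality gate that runs before peer assignment, assuming a hypothetical `CustomerRecord` with only a few fields and a hypothetical one-year KYC refresh policy. It illustrates two ideas from the paragraphs above: filling gaps in one silo’s record with another silo’s data, and holding back records with missing or stale attributes instead of letting them define a peer group.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import List, Optional


@dataclass
class CustomerRecord:
    # Hypothetical minimal profile; real KYC records carry far more attributes.
    customer_id: str
    annual_income: Optional[float]
    occupation: Optional[str]
    last_kyc_review: Optional[date]


MAX_KYC_AGE = timedelta(days=365)  # hypothetical refresh policy, not a regulatory rule


def merge_records(a: CustomerRecord, b: CustomerRecord) -> CustomerRecord:
    """Fill gaps in one silo's record with values from another silo's record."""
    if a.customer_id != b.customer_id:
        raise ValueError("records belong to different customers")
    return CustomerRecord(
        customer_id=a.customer_id,
        annual_income=a.annual_income if a.annual_income is not None else b.annual_income,
        occupation=a.occupation or b.occupation,
        # Keep the most recent review date either silo knows about.
        last_kyc_review=max(
            (d for d in (a.last_kyc_review, b.last_kyc_review) if d is not None),
            default=None,
        ),
    )


def quality_issues(rec: CustomerRecord, today: date) -> List[str]:
    """List problems that should block peer group assignment until fixed."""
    issues = []
    if rec.annual_income is None or rec.annual_income < 0:
        issues.append("income missing or invalid")
    if not rec.occupation:
        issues.append("occupation missing")
    if rec.last_kyc_review is None or today - rec.last_kyc_review > MAX_KYC_AGE:
        issues.append("KYC data stale")
    return issues
```

For example, a branch record with no income and a review dated January 2022 returns both the income and staleness issues when checked on 1 June 2024; merged with a card-system record that holds the income and a March 2024 review date, it returns no issues. Records that still fail after merging would be routed to remediation rather than clustered.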

Strengthening Oversight through Model Governance

To mitigate the risks of biased or insufficient data, any AI-driven monitoring system needs a robust governance framework. This involves continuous testing and validation of models to detect and correct performance drift over time. A human-in-the-loop approach ensures that final decisions on suspicious activity reports (SARs) are reviewed by experienced analysts who can supply context a machine might miss. Institutions should also explore data pooling and collaborative analytics to broaden their training sets, which can help build more representative and fair peer groups. Balancing technological innovation with ethical oversight is critical to the long-term success of automated transaction monitoring.

Governance should begin at the highest levels of the organization, with clear policies on the procurement, deployment, and auditing of AI tools. Periodic third-party reviews can offer an objective assessment of whether a model is functioning as intended or has begun to develop harmful biases. Training programs for compliance staff should also be updated to include technical literacy, so that staff understand the logic behind AI alerts rather than simply following a checklist. By fostering a culture of accountability, financial firms can harness AI to build a safer financial system while minimizing the negative side effects of automation. The ultimate goal is a synergy in which human intuition and machine processing power work together to create a more resilient defense against global financial crime.

Beyond internal policies, international cooperation is becoming increasingly important as regulators look to establish common standards for the use of AI in financial surveillance. These standards aim to ensure that, while banks use advanced tools to protect their assets, they do not infringe on customers’ rights through opaque or discriminatory practices. As the technology matures, the focus will likely shift from simply detecting crime to ensuring that the methods used are as fair and transparent as they are effective.
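For the drift monitoring described above, one widely used check is the Population Stability Index (PSI), which compares how a model input or score is distributed now against how it was distributed at training time: PSI = Σ (aᵢ − eᵢ) · ln(aᵢ / eᵢ) over shared bins, where eᵢ and aᵢ are the expected and actual proportions. The article does not prescribe a metric; PSI is a swapped-in example, and the 0.1 and 0.25 cut-offs below are informal rules of thumb from model risk practice, not regulatory requirements.

```python
import math
from typing import Sequence


def psi(expected: Sequence[float], actual: Sequence[float], eps: float = 1e-6) -> float:
    """Population Stability Index between two binned distributions.

    Both inputs are per-bin counts over the same bins (training-time vs. current).
    `eps` avoids log(0) when a bin is empty.
    """
    if len(expected) != len(actual):
        raise ValueError("distributions must use the same bins")
    e_total, a_total = sum(expected), sum(actual)
    score = 0.0
    for e, a in zip(expected, actual):
        e_pct = max(e / e_total, eps)
        a_pct = max(a / a_total, eps)
        score += (a_pct - e_pct) * math.log(a_pct / e_pct)
    return score


def drift_status(score: float) -> str:
    # Informal rule-of-thumb bands; each institution sets its own thresholds.
    if score < 0.1:
        return "stable"
    if score < 0.25:
        return "monitor"
    return "investigate"
```

If a peer group’s scores were spread evenly across four bins at training time (25 each) but now fall 10/20/30/40, the PSI is roughly 0.23, which this sketch labels `monitor`. In a governance process, a result in the upper band would typically trigger revalidation and human review rather than automatic retraining.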

Key Points

- Peer group comparison allows systems to detect anomalies by comparing individual behavior against similar customer profiles.

- The accuracy of machine learning models in AML is highly dependent on the quality and completeness of the training data.

- Biased data sets can lead to discriminatory outcomes and disproportionate scrutiny of certain demographic or geographic groups.

- Regulatory bodies emphasize the importance of model explainability and human oversight in the deployment of automated monitoring tools.

- Effective governance requires continuous validation to prevent model drift and ensure ethical standards are maintained.

Related Links

- FATF Report on the Opportunities and Challenges of New Technologies for AML and CFT

- European Banking Authority Report on the Use of Artificial Intelligence in the Financial Sector

- Wolfsberg Group Statement on Demonstrating Effectiveness in AML and CFT Programs

- Guidance on Financial Inclusion and Anti-Money Laundering Measures by the FATF

- Financial Crimes Enforcement Network Innovation Hours Program Overview

Other FinCrime Central Articles About The Impact of AI in AML

- Why One AI Logic Can Create 2 Different Biased AML Worlds

- Why AI Explainability Is Becoming a Regulatory Imperative in AML

- AI-Driven AML or Clever Rules Disguised as Innovation

Some of FinCrime Central’s articles may have been enriched or edited with the help of AI tools. They may contain unintentional errors.